Replicate

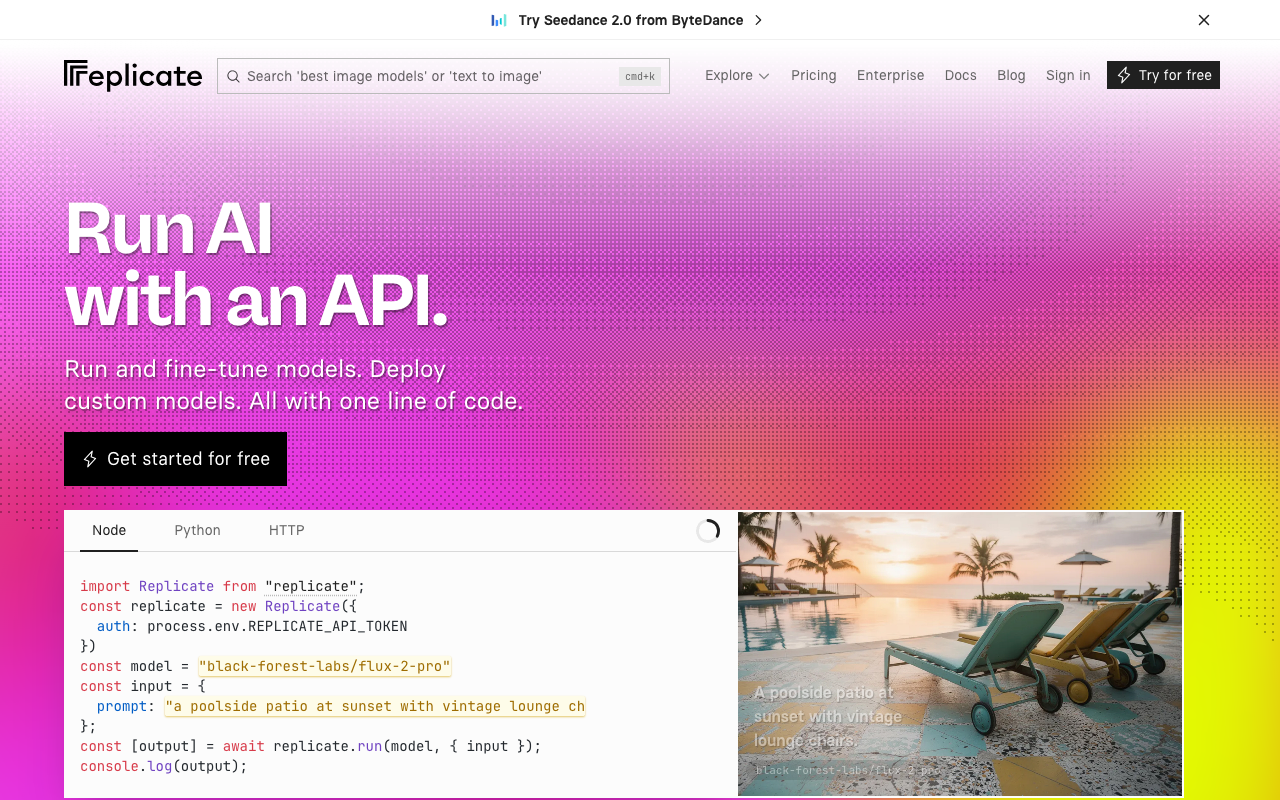

Multi-model inference API for open-source ML with per-second pricing and one-command deploys.

Description

Replicate is the inference platform that popularized running open-source ML models as HTTP endpoints. In 2026 it offers thousands of public models (FLUX, SDXL, Whisper, LLaMA, Stable Video, TTS, embeddings, ControlNets), plus proprietary models such as Claude and GPT via proxy, all reachable through Python and JavaScript SDKs. Billing is per GPU-second (from $0.000025/s on a small CPU instance to $0.0112/s on 8x A100) or per output on curated models (FLUX Pro at $0.04 per image, Claude 3.7 Sonnet at $3 per million input tokens). You can upload your own models packaged with Cog, fine-tune LoRAs, and expose them as private endpoints. There is no subscription: you pay as you go, with some initial credit after signup and an enterprise option for high volumes.

Detailed Evaluation

Key advantages

Huge catalog of open-source models

Thousands of community and official models ready to run with a single HTTP call, from image to audio, video, and text.

Cog: package your own model

The Cog tool lets you turn any Python model into a reproducible image and publish it as an endpoint in minutes.
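To give a sense of what packaging with Cog involves, here is a minimal sketch of a predictor class. It assumes Cog's Python interface (`BasePredictor` with `setup` and `predict`, and `Input` for typed parameters); the toy "model" that uppercases text is purely illustrative, and the fallback stubs in the `except` branch are not part of Cog, they only let the sketch run where Cog is not installed.

```python
try:
    from cog import BasePredictor, Input
except ImportError:
    # Fallback stubs (NOT part of Cog) so this sketch runs without Cog installed.
    class BasePredictor:
        pass

    def Input(default=None, **_kwargs):
        return default


class Predictor(BasePredictor):
    def setup(self) -> None:
        """Runs once when the container starts; load model weights here."""
        self.suffix = "!"  # placeholder standing in for an expensive model load

    def predict(
        self,
        text: str = Input(description="Text to transform"),
        repeat: int = Input(description="Number of repetitions", default=1, ge=1, le=10),
    ) -> str:
        """Runs once per request and returns the model output."""
        return " ".join([text.upper() + self.suffix] * repeat)
```

In a real project this file would sit next to a `cog.yaml` that points at `predict.py:Predictor`, and the image would typically be built and published with the Cog CLI; the exact configuration depends on the model's dependencies.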

Granular per-second pricing

You pay exactly the GPU time your model consumes, with configurable hardware from small CPU to 8x A100.
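The per-second model is easiest to reason about with a quick calculation. The sketch below uses only the two rates quoted in this review; the hardware labels (`cpu-small`, `8x-a100`) are hypothetical names chosen for the example, and the rates should be checked against the current pricing page.

```python
# Per-second rates quoted in this review (USD). Labels are illustrative, not official SKU names.
RATES_USD_PER_SECOND = {
    "cpu-small": 0.000025,
    "8x-a100": 0.0112,
}


def estimate_cost(hardware: str, seconds: float, runs: int = 1) -> float:
    """Estimate the USD cost of `runs` predictions that each take `seconds`."""
    if seconds < 0 or runs < 0:
        raise ValueError("seconds and runs must be non-negative")
    return RATES_USD_PER_SECOND[hardware] * seconds * runs


# Example: 1,000 runs of a 45-second job on 8x A100 comes to about $504.
video_batch_cost = estimate_cost("8x-a100", 45, runs=1000)
```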

Accessible fine-tuning

Launching fine-tunes (FLUX LoRAs, SDXL, etc.) is a single API call and results deploy automatically.

Excellent JS and Python DX

The official SDKs are minimalist and make streaming, webhooks, and job cancellation trivial to integrate.
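Under the SDKs sits a small HTTP API. As a rough sketch of what webhooks and cancellation look like at that level, the helpers below build (but do not send) the two requests. The endpoint paths and the `webhook` / `webhook_events_filter` fields reflect the public HTTP API as commonly documented and should be verified against the current reference; the helper names and the example values are hypothetical.

```python
import json
import os
import urllib.request

API_BASE = "https://api.replicate.com/v1"


def _headers() -> dict:
    # The token is read from the environment; an empty value is used if it is unset.
    token = os.environ.get("REPLICATE_API_TOKEN", "")
    return {"Authorization": f"Bearer {token}", "Content-Type": "application/json"}


def build_create_request(version: str, model_input: dict, webhook_url: str) -> urllib.request.Request:
    """Build a POST that starts a prediction and asks for a webhook on completion."""
    body = {
        "version": version,
        "input": model_input,
        "webhook": webhook_url,
        "webhook_events_filter": ["completed"],
    }
    return urllib.request.Request(
        f"{API_BASE}/predictions",
        data=json.dumps(body).encode("utf-8"),
        headers=_headers(),
        method="POST",
    )


def build_cancel_request(prediction_id: str) -> urllib.request.Request:
    """Build a POST that cancels a running prediction."""
    return urllib.request.Request(
        f"{API_BASE}/predictions/{prediction_id}/cancel",
        headers=_headers(),
        method="POST",
    )
```

Passing either request to `urllib.request.urlopen` would send it; in practice the official SDKs handle this, along with streaming, for you.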

Limitations to consider

Cold starts and variable latency

Less popular models can take tens of seconds to start on the first request, which user-facing apps tolerate poorly.

Billing easy to underestimate

Seconds on 8x A100 add up quickly for video workloads, and without spending limits the bill can come as a surprise.
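One way to keep this in check is a client-side spending cap. The sketch below is not a Replicate feature: `BudgetGuard` is a hypothetical helper that tracks estimated spend from run durations and refuses any run that would push the total over a limit.

```python
class BudgetGuard:
    """Tracks estimated spend and rejects runs that would exceed a cap."""

    def __init__(self, cap_usd: float) -> None:
        self.cap_usd = cap_usd
        self.spent_usd = 0.0

    def charge(self, seconds: float, rate_usd_per_second: float) -> float:
        """Record one run's estimated cost, raising before the cap is exceeded."""
        cost = seconds * rate_usd_per_second
        if self.spent_usd + cost > self.cap_usd:
            raise RuntimeError(
                f"run would cost ${cost:.2f}, exceeding the ${self.cap_usd:.2f} cap"
            )
        self.spent_usd += cost
        return cost
```

With a $5 cap and two-minute video jobs on 8x A100 at the $0.0112/s rate quoted above, each job costs about $1.34, so the fourth job would be refused. Because the figures are estimates, the provider's own billing dashboard remains the source of truth.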

Inference speed lower than Fal

For popular diffusion models, Fal is often several times faster thanks to its optimized runtime, which puts Replicate at a disadvantage in latency-sensitive production workloads.

Minimal free tier

Initial credits are enough to try things, but not to prototype seriously without entering a card.

Standout Feature

The combination of a massive open-source model catalog plus Cog for packaging your own is unique: in 2026 Replicate remains the simplest way to go from a research repo to a production HTTP endpoint.

Comparison with Alternatives

Against Fal, it is more flexible and has a more open catalog but is slower on diffusion. Against Hugging Face Inference Endpoints, it has better DX and more granular per-second billing. Against Modal or Runpod, it gives up low-level GPU control in exchange for radical simplicity.

Ideal User

Developers and researchers who want to experiment with open-source ML models, package their own with Cog, and ship them to production without running their own infrastructure. Also fits products that need occasional access to dozens of different models.

Learning Curve

Running a model is as simple as calling 'replicate.run'. The curve steepens when packaging with Cog, optimizing cold starts, doing serious fine-tuning, or containing costs on heavy models.
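For reference, a first call with the Python client might look like the sketch below. It assumes the `replicate` package is installed and `REPLICATE_API_TOKEN` is set; the model slug and the `prompt` input are examples that should be checked against the model's page, and the `run` parameter is a hypothetical injection point added here so the function can be exercised offline.

```python
def generate_image(prompt: str, run=None):
    """Run a FLUX model on Replicate and return the client's output as-is."""
    if run is None:
        # Imported lazily so this module loads even without the client installed.
        import replicate  # pip install replicate; reads REPLICATE_API_TOKEN

        run = replicate.run
    return run(
        "black-forest-labs/flux-schnell",  # example slug; confirm on the model page
        input={"prompt": prompt},
    )
```

Calling `generate_image("an astronaut riding a horse, watercolor")` blocks until the prediction finishes. The shape of the return value (URLs or file-like objects) depends on the model and the client version.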

Best For

- Developers who want to try open-source models without provisioning GPUs

- Products integrating image generation with FLUX or SDXL

- ASR+TTS pipelines with Whisper and open-source voice models

- Teams packaging their own models with Cog and exposing them as APIs

- Quick LoRA fine-tuning and immediate deployment as an endpoint

Not Ideal For

- Cases with hard sub-second latency requirements on diffusion (Fal is ahead)

- Zero-budget projects where a generous free tier is essential

- Workloads that depend on fine-grained hardware control